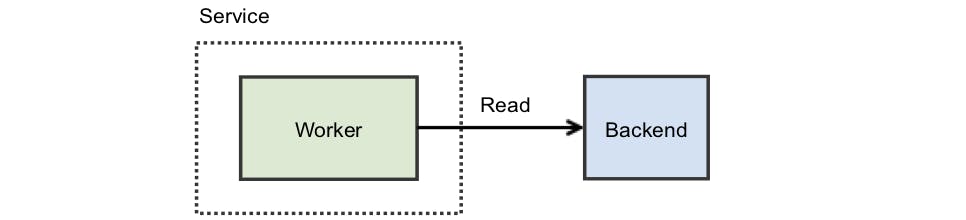

Suppose you’re writing a simple service: it handles inbound requests from your users, but doesn’t hold any data itself. This means that in order to handle any inbound requests it needs to refer to a backend to actually fetch whatever is required. This is a great feature as it means your service is stateless) — it’s easier to test, simpler to scale, and typically easier to understand. In our story, you’re also lucky as you only handle a single request at a time with a single worker doing the work. Your service diagram might look like this:

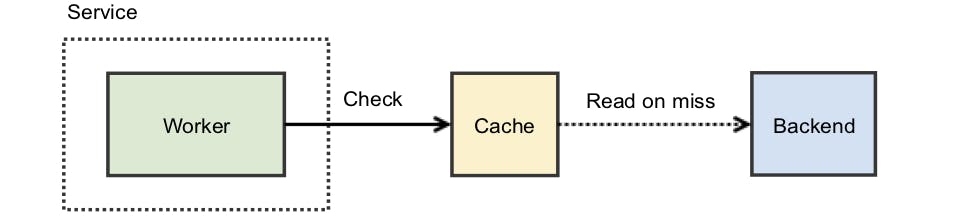

All is well but latency is an issue. The backend you use is slow. To address this, you notice that the vast majority — perhaps 90% — of the requests are the same. One common solution is to introduce a cache: before hitting the backend, you check your cache and use that value if present.

With the above design, 90% of incoming requests do not hit the backend. You’ve just reduced your resource requirements by 10x! Of course, there are some additional details you now need to consider. Namely, what happens if the cache is erased, or if a brand new request appears? In either scenario, your assumption of 90% similarity is broken. There are many ways you may choose to work around this, for example: If the cache is erased because you’ve had to restart the service, you could consider making the cache external:

a) Leveraging redis, or some other external caching service. b) Using local disk as something “external” to your service you can load on startup. c) Using something clever with shared memory segments to restart without discarding the in-memory state. If the request is a brand new feature, you could handle it incrementally: a) Deploy the new client that makes the request slowly, or adjust your distribution plans so it’s not all at once. b) Have your service perform some sort of warmup, perhaps with fake requests emulating what you expect.

Furthermore, your service might not actually live alone. You might have lots of other instances of it, and an existing instance could be communicated with to accelerate your cache warmup routine.

All of these add complexity, some of them add intricate failure modes or make your service just more troublesome to deal with (what do you mean I can’t dial everything to 100% immediately?). Chances are your cache warms pretty quickly, and the less boxes on your service diagram the less things go wrong. Ultimately, you’re happy with doing nothing.

The Reality

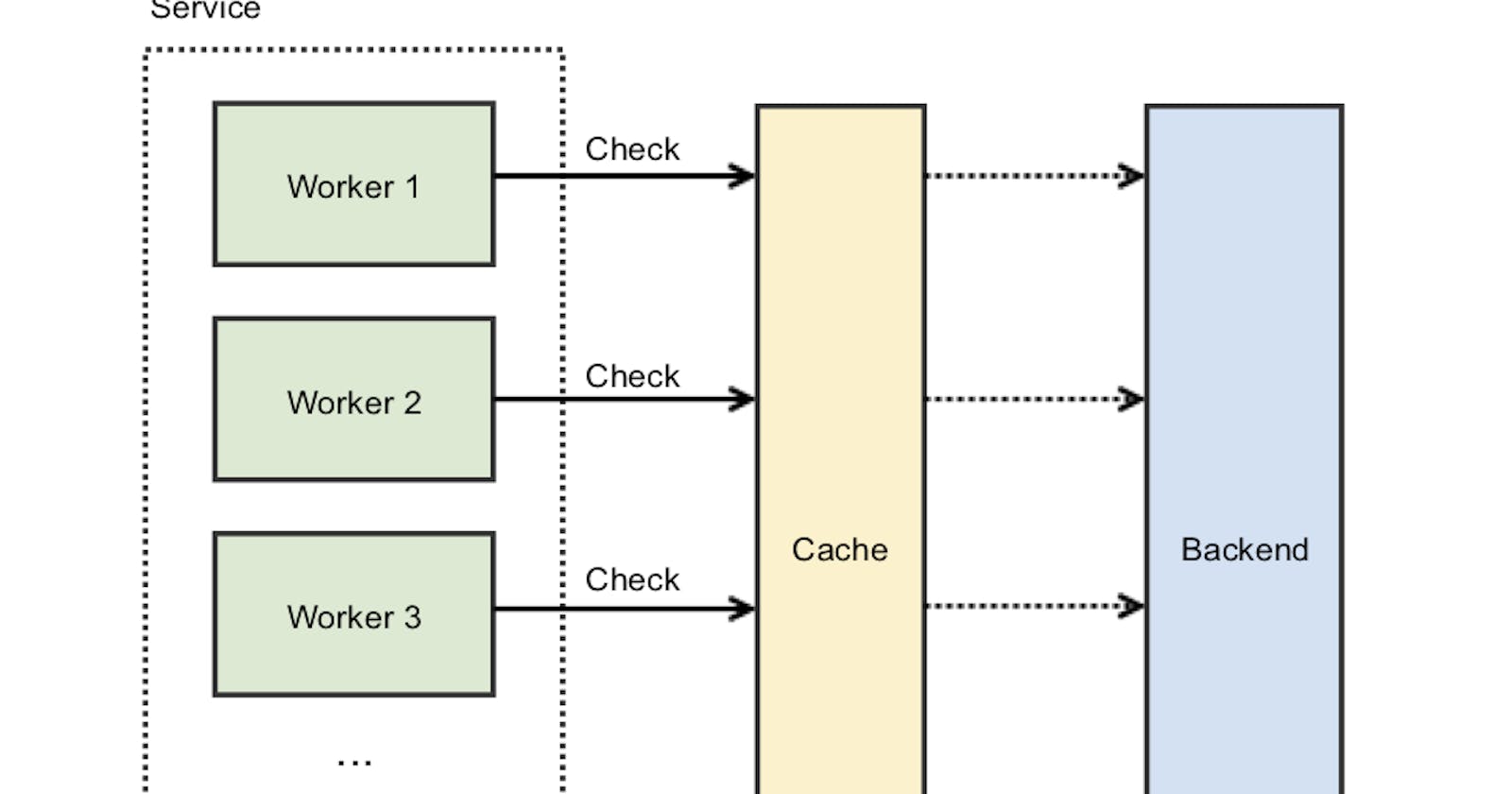

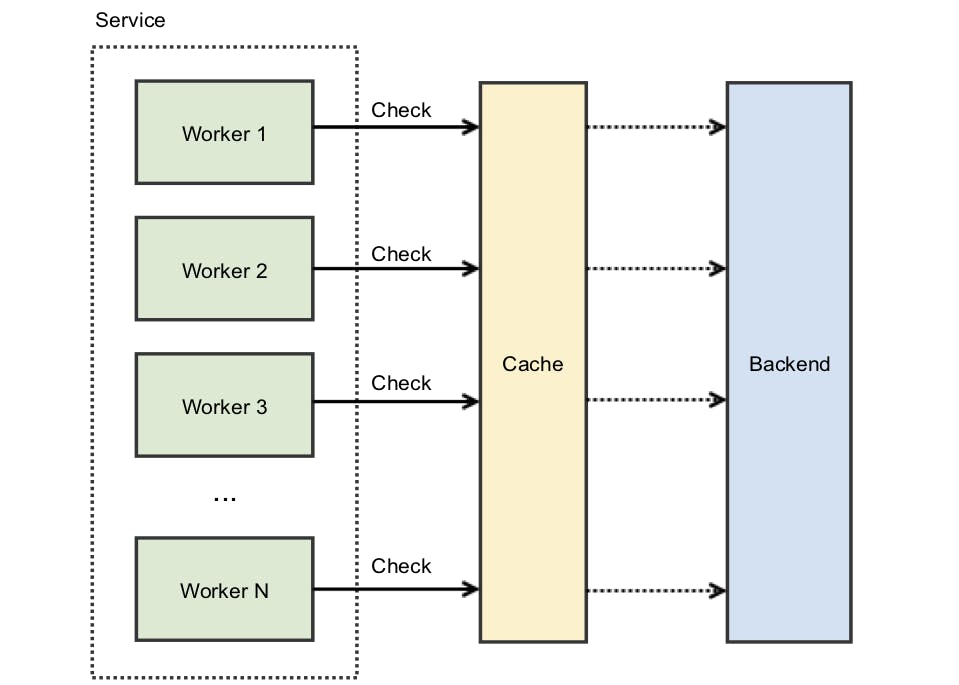

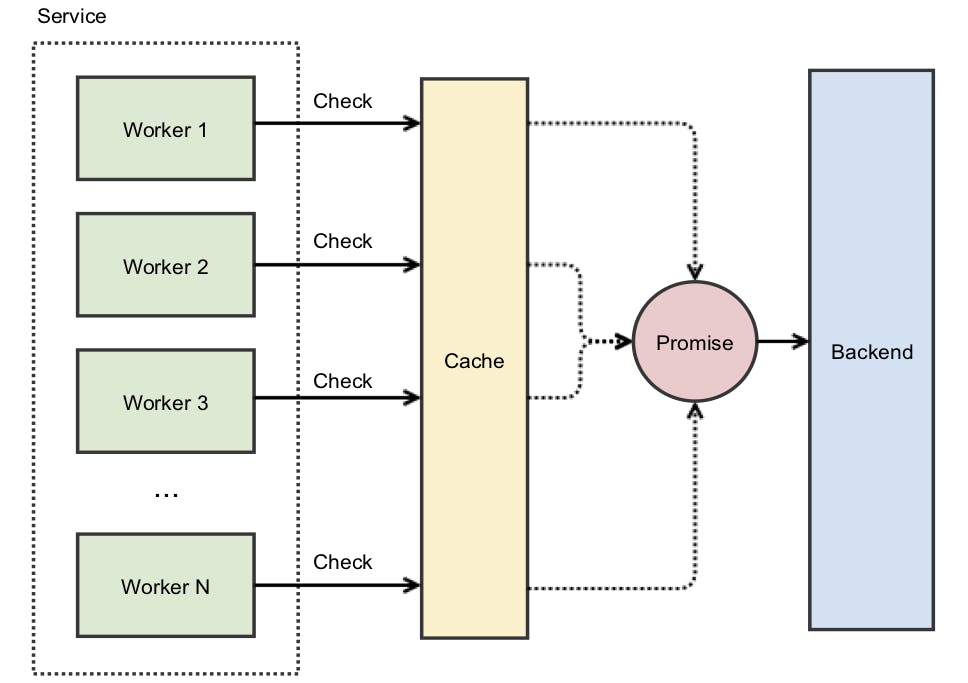

The above diagram isn’t really what your service looks like. It’s a runaway success and each service is doing quite a lot of work. Your service actually looks like this:

However, the cache-empty case is now much more problematic. To illustrate: if your cache is hit with 100 concurrent requests, then, since the cache is empty, all of them will get a cache-miss at the one moment, resulting in 100 individual requests to the backend. If the backend is unable to handle this surge of concurrent requests (ex: capacity constraints), additional problems arise. This is what’s sometimes called a thundering herd (Courtsey: instragram engineering).

The Solution

Solution is to cached promises. A Promise is an object that represents a unit of work producing a result that will be fulfilled at some point in the future. You can “wait” on this promise to be complete, and when it is you can fetch the resulting value. When there are n concurrent request do an atomic check weather the key exists in cache. Redis transaction can be used to do this. Atomically set a special value to signal other nodes that the key is reserved. Thus a leader from the n requests can be appointed. This leader node can then do the async request to backend. Other node can simply listen to the cache set event on this key and resolve their own responses.

So backend will receive only one request instead of 1000s.

memoise npm

I have created a npm lib memoise to solve this problem. By leveraging redis it simulate cached promises and do only 1 backend for n concurrent requests. Pull requests are welcome at github.